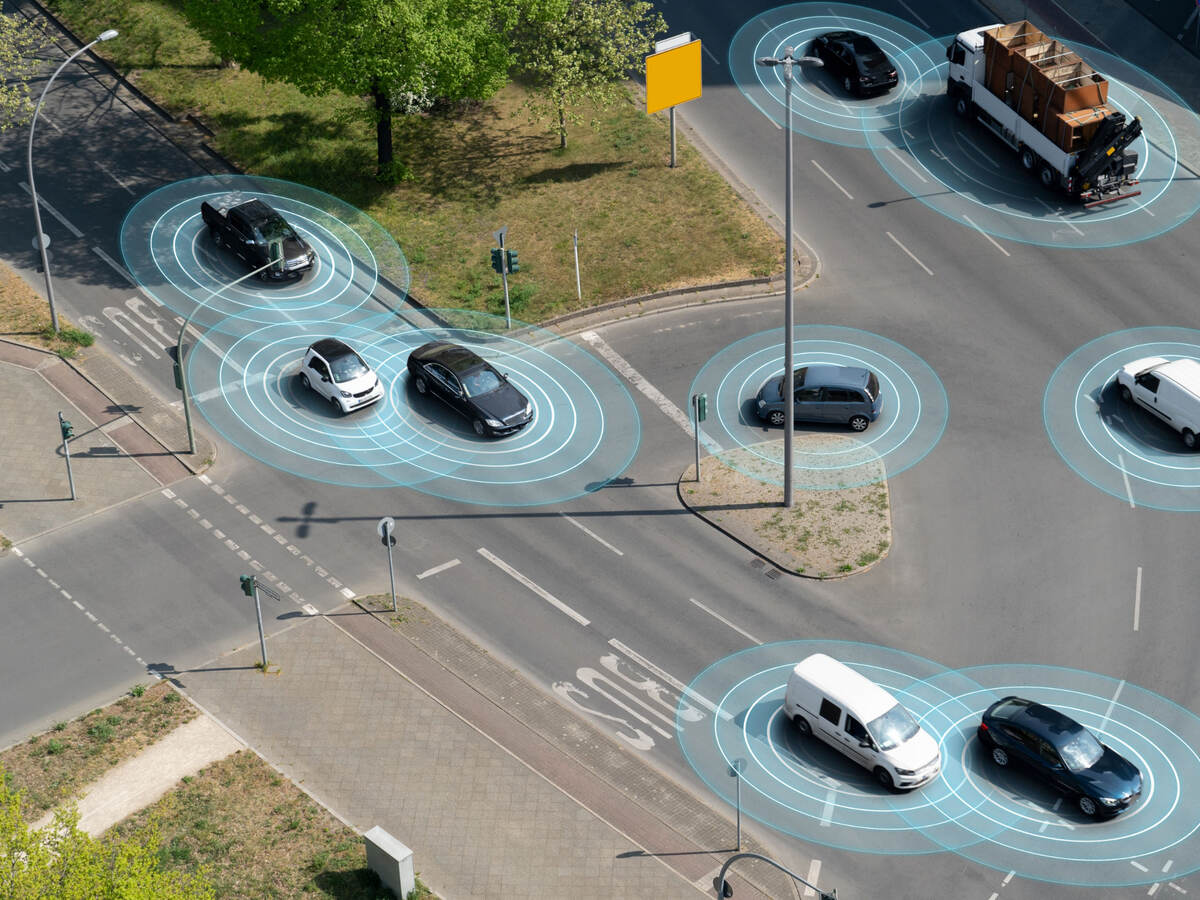

Artificial intelligence, in particular its subfield machine learning (ML), is quickly becoming a normal feature of everyday life. Although most people likely associate this technology with objects such as smart home devices and smartphones, autonomous vehicles are already on the road and increasing in number. This exciting technology holds the potential to reduce accidents by eliminating human error, but the safety of these systems should not be assumed. Because a machine learning model is an approximation of a data distribution, it is subject to unknowns and is only as capable as it is engineered to be. The only way to fully understand safety is with metrics designed to determine if a model is performing as expected.

There are currently many performance metrics for evaluating machine learning models. But are these metrics suitable for analyzing safety in autonomous vehicles? Do they adequately evaluate data diversity (such as skin tone, gender and other pedestrian attributes) for autonomous vehicles that operate in diverse and complex environments?

Kaushik Madala, a functional safety project engineer for kVA by UL, explains the need for safety metrics and how these metrics can support better machine learning models for autonomous vehicles and help ensure data diversity is adequately considered.

- As machine learning components become widely adopted in autonomous vehicles, several performance metrics have been created to help assess machine learning models. What do these metrics cover? What do they miss?

Most of the metrics currently in use give overall performance, but they don’t help identify the actual causes of errors. For example, if a machine learning model for identifying pedestrians has an accuracy of 95%, that percentage covers all pedestrian-related categories: gender, clothing styles, age groups and everything else. The problem is that the aggregate 95% cannot be ascribed to any individual attribute, so the detection rate for some attributes may be higher and for others lower.
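To illustrate the point about the 95% figure, here is a minimal Python sketch that breaks a single accuracy number down by one attribute. The record format, the `clothing` attribute and the 80/20 split are hypothetical, chosen only so that the aggregate comes out at 95%.

```python
from collections import defaultdict


def accuracy_by_attribute(records, attribute):
    """Return (overall accuracy, {attribute value: accuracy}).

    Each record is a dict with a boolean "correct" field plus attribute
    fields, e.g. {"correct": True, "clothing": "dark"} (hypothetical schema).
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for record in records:
        value = record[attribute]
        totals[value] += 1
        hits[value] += int(record["correct"])
    overall = sum(hits.values()) / sum(totals.values())
    per_value = {value: hits[value] / totals[value] for value in totals}
    return overall, per_value


# Hypothetical evaluation set: 80 pedestrians in light clothing, 20 in dark.
records = (
    [{"correct": True, "clothing": "light"}] * 79
    + [{"correct": False, "clothing": "light"}] * 1
    + [{"correct": True, "clothing": "dark"}] * 16
    + [{"correct": False, "clothing": "dark"}] * 4
)
overall, per_value = accuracy_by_attribute(records, "clothing")
# overall is 0.95, but per_value is {"light": 0.9875, "dark": 0.8}
```

The same aggregate hides a 98.75% detection rate for one group and 80% for the other; grouping by further attributes (or combinations of them) works the same way.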

The current metrics are therefore not suitable for helping to ensure the safety of other road users, such as pedestrians, or for identifying areas where an ML model’s performance needs improvement. We need metrics that consider operational design domain factors and the attributes of the objects themselves, allowing for greater specificity. These new metrics will also need to track additional aspects, such as near misses and speed profiles, to sufficiently analyze the context in which hazardous events are likely to happen.
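As one possible way to track near misses alongside speed profiles, the sketch below uses time to collision (TTC), a common surrogate safety measure that assumes both road users keep their current speeds. The trace format and the 1.5 s threshold are assumptions for illustration, not values taken from any standard.

```python
import math


def time_to_collision(gap_m, ego_speed_mps, obj_speed_mps):
    """Constant-speed time to collision (s) with an object ahead in the
    ego vehicle's path; infinite when the gap is not closing."""
    closing_speed = ego_speed_mps - obj_speed_mps
    if closing_speed <= 0:
        return math.inf
    return gap_m / closing_speed


def count_near_miss_events(trace, ttc_threshold_s=1.5):
    """Count distinct episodes in which TTC drops below the threshold.

    trace: sequence of (gap_m, ego_speed_mps, obj_speed_mps) samples
    (hypothetical log format). Consecutive below-threshold samples count
    as a single event.
    """
    events = 0
    was_below = False
    for gap, v_ego, v_obj in trace:
        is_below = time_to_collision(gap, v_ego, v_obj) < ttc_threshold_s
        if is_below and not was_below:
            events += 1
        was_below = is_below
    return events


# Hypothetical trace; TTC per sample is 3.0, 1.2, 1.0, inf, 0.8 seconds.
trace = [(30, 15, 5), (12, 15, 5), (9, 14, 5), (20, 10, 10), (8, 12, 2)]
near_misses = count_near_miss_events(trace)  # 2 events
```

Counting episodes rather than samples keeps one long close approach from being reported as several near misses.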

- Why do you think ISO 21448, the safety of the intended functionality (SOTIF) standard, has not yet been adequately addressed?

There are two reasons. First, SOTIF is a relatively new concept, and its guidelines and systematic procedures are not as well developed as those for functional safety analyses. Second, the concept of unknowns in SOTIF is often misunderstood. Our focus is on autonomous vehicles and reducing what they don’t know, which is not the same as what engineers know or don’t know. If an engineer has knowledge about something, that does not mean the vehicle has knowledge about it too. Also, because ML models are approximations, generalization is a significant issue, which means it is always possible for ML models to have unknowns. How to determine the acceptable performance of an ML model while taking into account the acceptance criteria defined at the vehicle level is still an open question that needs systematic solutions.

- What is it about assessing the safety of machine learning models that makes it unique?

Two aspects make the safety of machine learning models unique. The first is generalization, which is the extent to which ML models can give correct output on unseen data. The second is determinism, or whether the ML model consistently gives the same answer for a given input. Determinism is an interesting topic because of how it is perceived. Machine learning models are often considered to be nondeterministic; in reality, however, ML models can be made computationally deterministic. This means that once randomness-inducing factors (such as unseeded random number generators or dropout left active at inference time) are adequately addressed, passing a given input through the ML model multiple times produces the same computational output.

Of course, the output can be incorrect, but it is consistently incorrect. For example, despite getting an incorrect class label from the classifier, we get the same incorrect label no matter how many times we pass the input to the same model.
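A minimal sketch of computational determinism, assuming a toy linear classifier in pure Python (the model, its weights and the dropout step are hypothetical, not a production network). With weight initialization seeded and inference-time randomness either disabled or reseeded, repeated passes over the same input return the same label, whether or not that label is correct.

```python
import random


def make_model(seed, n_classes=3, n_features=4):
    """Build a toy linear classifier whose weights come from a seeded RNG."""
    rng = random.Random(seed)
    return [[rng.uniform(-1, 1) for _ in range(n_features)]
            for _ in range(n_classes)]


def predict(weights, x, dropout_rng=None, p=0.5):
    """Return the index of the highest-scoring class.

    If dropout_rng is given, each input is zeroed with probability p
    (inverted dropout), a randomness-inducing step at inference time.
    """
    if dropout_rng is not None:
        x = [0.0 if dropout_rng.random() < p else xi / (1 - p) for xi in x]
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return max(range(len(scores)), key=scores.__getitem__)


model = make_model(seed=42)
x = [0.2, -1.0, 0.5, 0.3]

# No randomness at inference: 100 passes give exactly one distinct label.
labels = {predict(model, x) for _ in range(100)}

# Dropout with a freshly reseeded RNG is also repeatable; an unseeded
# random.Random() here could make repeated passes disagree.
seeded_labels = {predict(model, x, dropout_rng=random.Random(7))
                 for _ in range(100)}
```

Real frameworks add further sources of variation (for example, parallel floating-point reductions on GPUs), so the same idea has to be applied to each of them.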

- When considering machine learning metrics that are applicable to SOTIF, what will be your first priority?

My first priority will be to check whether the metrics we are proposing or using help us understand if the vehicle will meet its acceptance criteria. If the metrics cannot be mapped to the acceptance criteria, then we cannot analyze SOTIF, and new metrics would need to be proposed.
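One hypothetical way to map a model-level metric to a vehicle-level acceptance criterion is to budget a target rate of hazardous events down to an allowed missed-detection rate. The simple multiplicative risk model and every number below are illustrative assumptions, not figures from ISO 21448 or from any real vehicle program.

```python
def max_allowed_miss_rate(target_hazard_rate_per_h, encounters_per_h,
                          p_hazard_given_miss):
    """Allowed per-encounter missed-detection rate under a simple, assumed
    risk model: hazard rate = encounters/h * miss rate * P(hazard | miss)."""
    return target_hazard_rate_per_h / (encounters_per_h * p_hazard_given_miss)


def meets_acceptance(misses, trials, allowed_rate):
    """Point-estimate check only; a real safety argument would also need a
    statistical confidence bound on the observed rate."""
    return misses / trials <= allowed_rate


# Illustrative numbers only: 1e-7 hazardous events per hour as the target,
# 50 pedestrian encounters per hour, 1% of misses leading to a hazard.
allowed = max_allowed_miss_rate(1e-7, 50, 0.01)  # about 2e-7 per encounter
zero_misses_ok = meets_acceptance(0, 1_000_000, allowed)
five_misses_ok = meets_acceptance(5, 1_000_000, allowed)
```

A point estimate alone is weak evidence here: with zero observed misses in n trials, the "rule of three" puts the approximate 95% upper bound on the rate at 3/n, so supporting 2e-7 would take on the order of 15 million miss-free encounters.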

- Is this situation further complicated by the fact that machine learning is being used on roadways with human drivers rather than only with fully autonomous vehicles?

That adds to the complexity of the situation. Even if all vehicles were self-driving, certain hazardous events would still be possible, but when human drivers and self-driving cars share the road, more can go wrong. When engineers develop self-driving cars, they tend to assume other drivers and road users follow the rules of the road. However, this is not always the case. For example, in our experience, engineers tend to assume pedestrians will never cross a highway, but statistics show that around 18% of total pedestrian fatalities in the United States occur on freeways and highways. Pedestrians are not supposed to be in such places, but these accidents still happen, and machine learning models need to take that into account.

- Distance, speed and time are already considered for performance. How would safety metrics in these areas differ from those for performance?

Usually, we use distance, speed and time to describe a vehicle’s characteristics, but we need to understand how these attributes affect the predictions of the ML model and what we can do from a design point of view to correct potential issues. For example, if the images from an autonomous vehicle’s camera get slightly blurry at faster speeds, such as 60 miles per hour, we need to determine if the machine learning model can detect an object with the same capability it has at slower speeds.

With this in mind, we need to ask a few questions: Do we want to continue using the same model? Do we want to retrain it with images captured at varying speeds, since such images weren’t in our training data? Or do we want to use a different sensor that can capture this information more accurately?
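To make the 60 mph example concrete, the following sketch compares detection recall across ego-speed bins against a low-speed baseline. The log format, bin edges and 2-percentage-point tolerance are assumptions chosen for illustration.

```python
from collections import defaultdict


def recall_by_speed_bin(detections, bin_edges_mph):
    """Compute recall per ego-speed bin.

    detections: iterable of (ego_speed_mph, detected) pairs, one per
    ground-truth object (hypothetical log format).
    bin_edges_mph: sorted edges, e.g. [0, 30, 60, 90] gives the bins
    [0, 30), [30, 60) and [60, 90).
    """
    found = defaultdict(int)
    total = defaultdict(int)
    for speed, detected in detections:
        for lo, hi in zip(bin_edges_mph, bin_edges_mph[1:]):
            if lo <= speed < hi:
                total[(lo, hi)] += 1
                found[(lo, hi)] += int(detected)
                break
    return {b: found[b] / total[b] for b in total}


def degraded_bins(recalls, baseline_bin, tolerance=0.02):
    """Bins whose recall falls more than `tolerance` below the baseline bin."""
    base = recalls[baseline_bin]
    return [b for b, r in recalls.items() if base - r > tolerance]


# Hypothetical log: 20 pedestrians per speed band.
log = ([(25, True)] * 19 + [(25, False)]
       + [(45, True)] * 19 + [(45, False)]
       + [(65, True)] * 16 + [(65, False)] * 4)
recalls = recall_by_speed_bin(log, [0, 30, 60, 90])
flagged = degraded_bins(recalls, baseline_bin=(0, 30))  # [(60, 90)]
```

A flagged bin does not say which of the three options above is right; it only shows where the model’s capability at speed differs from its capability at low speed.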

- The number of attributes to consider for both operational design domain (ODD) and individual objects is significant. How can we propose safety metrics that will address this variability?

It is true that the number of combinations we need to consider is significant, and that presents both an advantage and a disadvantage: the combinations allow a fine-grained analysis, but covering all of them quickly becomes impractical. If we ignore them entirely, though, we might not be doing a meaningful analysis. The extent to which we need to consider the factors and attributes depends on what the machine learning model is being used for. For example, if the model only detects pedestrians, all the focus will be on pedestrians and corresponding attributes such as gender, clothing and background color; we do not need to consider detecting vehicles on the road with this model. So by using different models and limiting the scope to each model’s intended focus, we can reduce the number of combinations we need to consider.
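A rough sketch of how scoping a model shrinks the analysis space, using small hypothetical attribute lists (real ODD and object taxonomies would be much larger):

```python
from itertools import product

# Hypothetical levels; real ODD and object taxonomies are much larger.
ODD_FACTORS = {
    "lighting": ["day", "dusk", "night"],
    "weather": ["clear", "rain", "fog"],
    "road_type": ["urban", "highway"],
}
PEDESTRIAN_ATTRS = {
    "clothing": ["light", "dark"],
    "background": ["light", "dark", "cluttered"],
}
VEHICLE_ATTRS = {
    "vehicle_type": ["car", "truck", "bus", "motorcycle"],
    "color": ["light", "dark"],
}


def scenarios(odd_factors, object_attrs):
    """Enumerate every combination of ODD factor levels and object attributes."""
    factors = {**odd_factors, **object_attrs}
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


pedestrian_only = scenarios(ODD_FACTORS, PEDESTRIAN_ATTRS)  # 18 * 6 = 108
vehicles = scenarios(ODD_FACTORS, VEHICLE_ATTRS)            # 18 * 8 = 144
```

With these lists, a pedestrian-only model needs 108 combinations analyzed, while a single model that also had to detect vehicles would add 144 more, for 252. The gap widens quickly as levels are added, because each new factor multiplies the count.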

- Let’s talk more about inputs with low confidence. How do these affect safety, and how can they be measured to improve safety?

First, we need to understand what “low confidence” means, and second, we need to consider what we are attributing low confidence to. Engineers and automotive researchers often treat the output probability of a neural network model as its confidence, but this need not be the case: a model can produce high output probabilities and still warrant low confidence. This is why the machine learning community uses Bayesian approaches, which base confidence levels on prior and posterior distributions. When we refer to inputs with low confidence, we are talking about this kind of confidence level. When a result does not follow the expected output distributions, the confidence is low.
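To show how a high output probability can coexist with low confidence, the sketch below swaps in one common approximation to Bayesian uncertainty: disagreement among several models (for example, a deep ensemble or Monte Carlo dropout samples), measured as the mutual information between the prediction and the model. It illustrates the general idea rather than the specific method referred to in the interview, and the logits are made up.

```python
import math


def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(p):
    """Shannon entropy in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def confidence_report(member_logits):
    """Return (average top-class probability, mutual information).

    member_logits holds one logit vector per ensemble member or MC dropout
    sample for the same input. Mutual information = entropy of the averaged
    prediction minus the average member entropy; it is high when members
    are individually confident but disagree.
    """
    probs = [softmax(logits) for logits in member_logits]
    k = len(probs)
    mean = [sum(p[c] for p in probs) / k for c in range(len(probs[0]))]
    avg_top_prob = sum(max(p) for p in probs) / k
    mutual_info = entropy(mean) - sum(entropy(p) for p in probs) / k
    return avg_top_prob, mutual_info


# Made-up logits for a 3-class output.
disagreeing = [[6.0, 0.0, 0.0], [0.0, 6.0, 0.0], [0.0, 0.0, 6.0]]
agreeing = [[6.0, 0.0, 0.0]] * 3

top_d, mi_d = confidence_report(disagreeing)  # top_d > 0.99, mi_d > 1 nat
top_a, mi_a = confidence_report(agreeing)     # same top prob, mi_a near 0
```

Every member reports roughly 99.5% for its top class in both cases, yet only the agreeing set would count as high confidence under this measure.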

Another approach engineers use is to create their own metrics, called confidence metrics. For example, engineers count the number of times they’ve seen a particular object in the past and then estimate the likelihood that a given object is that previously seen object.
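The description above resembles the hit counting used in multi-object tracking. The following hypothetical sketch uses intersection over union (IoU) between bounding boxes as a rough stand-in for the likelihood that a new detection is the previously seen object, and uses the fraction of frames with a match as the confidence metric. The `Track` class, the box format and the 0.5 threshold are assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    def area(r):
        return (r[2] - r[0]) * (r[3] - r[1])

    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


class Track:
    """Hypothetical track that counts how often an object is re-detected."""

    def __init__(self, box):
        self.box = box
        self.hits = 1    # frames in which the object was detected
        self.frames = 1  # frames since the track started

    def update(self, detections, iou_threshold=0.5):
        """Advance one frame and match the best-overlapping detection.

        Returns the match IoU (used here as a rough likelihood that the
        detection is the same object), or 0.0 if nothing matched.
        """
        self.frames += 1
        best = max(detections, key=lambda d: iou(self.box, d), default=None)
        score = iou(self.box, best) if best is not None else 0.0
        if score >= iou_threshold:
            self.box = best
            self.hits += 1
            return score
        return 0.0

    @property
    def confidence(self):
        return self.hits / self.frames


track = Track((0, 0, 10, 10))
track.update([(1, 0, 11, 10)])  # matched, IoU = 90 / 110
track.update([])                # missed frame
track.update([(1, 1, 11, 11)])  # matched again
# track.hits is 3 and track.frames is 4, so track.confidence is 0.75
```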

The advantage of considering inputs with low confidence and corresponding metrics is that they help us determine where we might need more data or what the machine learning model might not be good at predicting.

- How can automotive companies seeking to integrate machine learning components into future vehicles consider enhanced safety metrics?

As we discussed before, new metrics should focus on the operational design domain, attributes of the objects, unknowns, dependencies, scenarios and other aspects to help automotive manufacturers fundamentally understand the limits of the machine learning components. These metrics will also help them understand whether using a particular machine learning component will allow them to meet specified acceptance criteria. When they integrate a machine learning model into their design, they’ll understand if they need any additional design components to overcome the limitations of that model. The metrics can also help in performing error analyses that enable them to learn more about unknowns.

For more information on UL Solutions, our machine learning capabilities and training opportunities, contact us today.
